Note: “We” throughout this post refers to Jim Smith working alongside Claude Code.

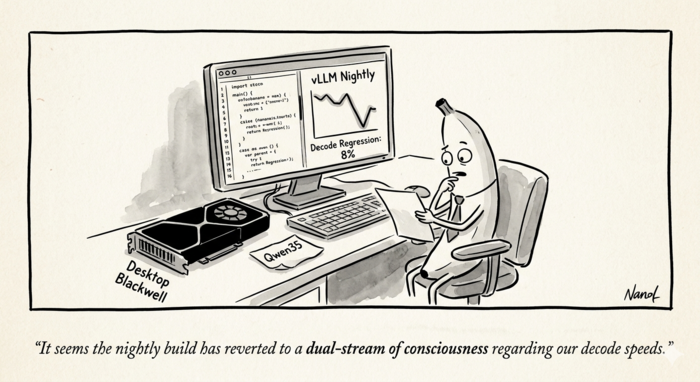

This morning my 87-year-old mother texted me that TJ Maxx had tried to charge her 6% sales tax on a clothing purchase in Pennsylvania. Clothing isn’t taxed in PA. She caught it at the register and saved herself the 6%. A few hours later, sitting in front of some vLLM benchmarks, I caught something that rhymed: an 8% loss in token generation speed that everyone on the moving nightly tag has been quietly paying. Same instinct, different register tape.

TL;DR

We noticed our Qwen3.5-35B-A3B serving container on an RTX PRO 6000 Blackwell Max-Q was decoding at 183 tok/s while a sibling container on an RTX 5090 hit 225 tok/s. We chalked it up to the 5090’s higher memory bandwidth — until a controlled test exposed the real story: the regression wasn’t the GPU, it was the vLLM nightly image. Running the exact same configuration on the PRO 6000 with a pinned mar23 nightly bumped decode from 183 → 198.6 tok/s, an 8% jump from changing only the image tag.

We bisected through 315 vLLM commits between the two image builds, narrowed to three suspects, and found the culprit: PR #38152, an 8-line revert of dual-stream execution for Qwen3 and Qwen3.5 input projections. The PR is unusually candid — it deliberately gave back hot-path decode throughput to fix a 4x cold-compile-time regression, with a TODO to re-enable once PyTorch 2.11 and #38123 land.

Three options if you’re hit by this: pin the older image, overlay the file to restore the parallel-stream branch, or wait for the proper fix. This post walks through the bisection and the tradeoff.

The setup

We run two vLLM containers side by side on a workstation with two Blackwell GPUs: an RTX PRO 6000 Blackwell Max-Q (97 GB, SM 12.0) and an RTX 5090 (32 GB, same SM). Both serve the same 35B-parameter hybrid MoE model, cyankiwi/Qwen3.5-35B-A3B-AWQ-4bit, through the vllm/vllm-openai:cu130-nightly image. One container stays pinned to a known-good digest; the other rides the moving nightly tag so we can test patches against the latest Triton, FlashInfer, and vLLM changes.

The pinned sibling on the 5090 has been humming along at around 225 tokens per second of single-stream decode. The latest-nightly container on the PRO 6000 was posting 183 tok/s. We had been telling ourselves this was just the 5090’s higher memory bandwidth showing up: decode is memory-bound, and the 5090’s ~1.8 TB/s is about 13% more than the PRO 6000 Max-Q’s ~1.6 TB/s. That felt close enough to explain the gap, even though 225 tok/s is about 23% faster than 183.

It wasn’t right. The difference wasn’t the GPU. It was the nightly.

The apples-to-apples test

The trick was to break the comparison into two steps.

Step 1: move the sibling’s exact configuration to the PRO 6000. We copied the sibling’s docker-compose verbatim, changed nothing except CUDA_VISIBLE_DEVICES=0 and the port, and pointed the image at the same pinned digest (vllm/vllm-openai@sha256:923cbdaf..., which maps to cu130-nightly-mar23). Single-stream decode on the PRO 6000 jumped from 183 to 198.6 tok/s. That closed most of the gap to the 5090’s 225 tok/s; what remains, a believable ~12%, is the memory-bandwidth story we had originally told ourselves.
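The percentages here are plain ratios, and a quick check makes the before/after comparison concrete. This sketch uses only the throughput numbers reported in this post and the approximate bandwidth figures quoted in the setup; nothing in it is a new measurement.

```python
def pct_faster(fast: float, slow: float) -> float:
    """How much higher `fast` is than `slow`, as a percentage."""
    return (fast / slow - 1.0) * 100.0

# Single-stream decode throughput (tok/s) reported in this post.
RTX_5090_PINNED = 225.0
PRO6000_NIGHTLY = 183.0
PRO6000_PINNED = 198.6

# Approximate memory bandwidth (TB/s) as quoted in "The setup".
BW_5090 = 1.8
BW_PRO6000_MAXQ = 1.6

original_gap = pct_faster(RTX_5090_PINNED, PRO6000_NIGHTLY)  # what we blamed on bandwidth
pinned_gap = pct_faster(RTX_5090_PINNED, PRO6000_PINNED)     # same pinned image on both GPUs
bandwidth_gap = pct_faster(BW_5090, BW_PRO6000_MAXQ)         # what bandwidth alone predicts

print(f"original gap:  {original_gap:.1f}%")
print(f"pinned gap:    {pinned_gap:.1f}%")
print(f"bandwidth gap: {bandwidth_gap:.1f}%")
```

Expressed as "how much faster the 5090 is", the pinned gap (~13%) lines up with the bandwidth ratio (12.5%) while the original ~23% does not. Measured the other way, as how much slower the PRO 6000 is, the pinned gap comes out at ~12%.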

Step 2: change only the image, not the configuration. Same container, same GPU, same PR #37700 chunk_o overlay, same --gpu-memory-utilization 0.5, same --max-num-seqs 8. Only the image tag changed: mar23 → latest cu130-nightly.

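Decode throughput in tok/s; the parallel columns are aggregate throughput across 2, 4, or 8 concurrent requests.

| Image | Single stream | 2 parallel | 4 parallel | 8 parallel |
|---|---|---|---|---|
| cu130-nightly-mar23 | 198.3 | 342.3 | 653.9 | 1119.1 |
| cu130-nightly (current) | 183.5 | 298.7 | 582.0 | 1030.8 |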

Roughly 8% slower on single-stream decode, and 8 to 13% slower under concurrency, with the worst loss at 2 parallel requests. Not a config artifact. Not a hardware story. The nightly got slower.
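The per-column losses can be read straight off the table. A small sketch that computes them from the numbers above (no new data):

```python
# Decode throughput (tok/s) from the table above, keyed by concurrency level.
MAR23 = {1: 198.3, 2: 342.3, 4: 653.9, 8: 1119.1}
CURRENT = {1: 183.5, 2: 298.7, 4: 582.0, 8: 1030.8}

def slowdown_pct(baseline: float, candidate: float) -> float:
    """Percent of baseline throughput lost when moving to candidate."""
    return (1.0 - candidate / baseline) * 100.0

slowdowns = {n: slowdown_pct(MAR23[n], CURRENT[n]) for n in MAR23}
for n, pct in slowdowns.items():
    print(f"{n} concurrent: {pct:.1f}% slower")
```

On these numbers the loss is about 7.5% single-stream, peaks near 12.7% at 2 concurrent requests, and is back around 7.9% at 8.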

Narrowing the window

Image versions revealed two vLLM commits at the endpoints:

- mar23: vllm 0.18.1rc1.dev32+g1f0d21064
- current: vllm 0.18.2rc1.dev54+g73f48ce55

Torch and Triton were identical (2.10.0+cu130 and 3.6.0 respectively). FlashInfer moved 0.6.6 → 0.6.7, but its kernels are not on the GDN decode path for this model. Between those two vLLM commits: 315 changes.
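The version strings carry the commit hashes after the "+g" (setuptools-scm style), which is enough to define the commit range. A minimal sketch, assuming a local clone of the vLLM repository at a path of your choosing; `commit_from_version` and `count_commits` are hypothetical helpers, and the count is not guaranteed to reproduce the 315 figure exactly.

```python
import re
import subprocess

# Dev builds embed the git short hash after "+g" in the version string.
_VERSION_RE = re.compile(r"\+g([0-9a-f]{7,40})")

def commit_from_version(version: str) -> str:
    """Extract the git hash from a version like '0.18.1rc1.dev32+g1f0d21064'."""
    match = _VERSION_RE.search(version)
    if match is None:
        raise ValueError(f"no +g<hash> suffix in {version!r}")
    return match.group(1)

def count_commits(repo: str, old: str, new: str) -> int:
    """Count commits reachable from `new` but not from `old` in a local clone."""
    out = subprocess.run(
        ["git", "-C", repo, "rev-list", "--count", f"{old}..{new}"],
        check=True, capture_output=True, text=True,
    )
    return int(out.stdout.strip())

old = commit_from_version("0.18.1rc1.dev32+g1f0d21064")  # mar23 image
new = commit_from_version("0.18.2rc1.dev54+g73f48ce55")  # current nightly
print(old, new)
```

With a clone at, say, ./vllm, `count_commits("vllm", old, new)` returns the size of the window.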

We filtered git log down to paths that actually touch the Qwen3.5-35B-A3B-AWQ decode hot loop — fused MoE, FLA kernels, the Qwen3.5/Qwen3-Next model files, the GPU model runner, and the sampler. That dropped the candidate list to roughly 80 commits. Three stood out immediately:
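Git can do the path filtering itself: anything after `--` in `git log` limits output to commits touching those paths. The path list below is our guess at where the hot-loop code lives in the vLLM tree, an assumption rather than the exact filter used here; adjust it against your checkout.

```python
import subprocess

# ASSUMED locations of the decode hot loop in the vLLM tree (verify locally).
HOT_PATHS = [
    "vllm/model_executor/layers/fused_moe/",
    "vllm/model_executor/layers/fla/",
    "vllm/model_executor/models/qwen3_5.py",
    "vllm/model_executor/models/qwen3_next.py",
    "vllm/v1/worker/gpu_model_runner.py",
    "vllm/v1/sample/",
]

def hot_path_log_cmd(repo: str, old: str, new: str, paths: list[str]) -> list[str]:
    """Build a `git log` command restricted to commits that touch `paths`."""
    return ["git", "-C", repo, "log", "--oneline", f"{old}..{new}", "--", *paths]

def hot_path_commits(repo: str, old: str, new: str, paths: list[str]) -> list[str]:
    """Run the filtered log and return one '<hash> <subject>' line per commit."""
    out = subprocess.run(hot_path_log_cmd(repo, old, new, paths),
                         check=True, capture_output=True, text=True)
    return out.stdout.splitlines()

cmd = hot_path_log_cmd("vllm", "1f0d21064", "73f48ce55", HOT_PATHS)
print(" ".join(cmd))
```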

- a8eab8f30, “Extract GatedDeltaNetAttention into shared layer for Qwen3Next and Qwen3.5”: a 2,000-line refactor.
- b779eb336, “Sync upstream BT=chunk_size fix for GDN chunk_fwd_kernel_o, simplify warmup to single pass”: touches the exact kernel we’d been trying to tune.
- 9704a5c31, “Disable dual stream execution of input projection for Qwen3.”

The last one was an 8-line diff in vllm/model_executor/models/qwen3_5.py. That was the culprit.

The culprit: PR #38152

Before the change, the GDN block’s input projection looked like this:

```python
mixed_qkvz, ba = torch.ops.vllm.gdn_in_proj(
    hidden_states,
    sum(self.in_proj_qkvz.output_sizes) // self.tp_size,
    sum(self.in_proj_ba.output_sizes) // self.tp_size,
    self.prefix,
)
```

After:

```python
mixed_qkvz, _ = self.in_proj_qkvz(hidden_states)
ba, _ = self.in_proj_ba(hidden_states)
```

The removed custom op wasn’t just a convenience wrapper. It dispatched the two projection GEMMs on two parallel CUDA streams, overlapping them. The replacement runs them sequentially on the default stream, so every GDN layer, on every decode step, pays the two GEMMs’ latencies back to back and loses the overlap.
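Why losing the overlap costs time on every step can be shown with an idealized cost model. This is an illustration only, not how vLLM’s gdn_in_proj is implemented: serial execution pays the sum of the two GEMM latencies, while perfect two-stream overlap pays roughly the longer of the two. The timings and layer count below are hypothetical placeholders, not measurements.

```python
from dataclasses import dataclass

@dataclass
class InProjTiming:
    """HYPOTHETICAL per-layer latencies (microseconds) of the two input projections."""
    qkvz_us: float
    ba_us: float

def serial_us(t: InProjTiming) -> float:
    # Default stream: in_proj_ba starts only after in_proj_qkvz finishes.
    return t.qkvz_us + t.ba_us

def overlapped_us(t: InProjTiming) -> float:
    # Idealized dual-stream case: both GEMMs run concurrently, so the layer waits
    # only for the slower one. Ignores event/sync overhead and SM contention.
    return max(t.qkvz_us, t.ba_us)

def extra_us_per_step(t: InProjTiming, n_gdn_layers: int) -> float:
    """Added latency per decode step when every GDN layer runs the projections serially."""
    return n_gdn_layers * (serial_us(t) - overlapped_us(t))

# Placeholder numbers purely for illustration (not the model's real values).
timing = InProjTiming(qkvz_us=20.0, ba_us=5.0)
print(extra_us_per_step(timing, n_gdn_layers=30))
```

Under this model the per-layer saving from overlap is the shorter GEMM’s latency, so even a small projection adds up when it is paid in every GDN layer on every decode step.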

PR #38152’s own description is unusually candid about the reason:

> Currently dual stream execution requires custom ops that pass the layer_name as a string. This will regress cold compile times by ~4x. So this PR temporarily reverts dual stream optimization in Qwen3 and Qwen3.5 models.
>
> TODO: Re-enable dual stream after #38123 and upgrade to Pytorch 2.11.

So this wasn’t a correctness fix or a cleanup. It was a deliberate runtime-performance regression accepted to fix a compile-time regression. That is a reasonable call when the alternative is ~4x longer cold compiles in CI, but the tradeoff lands on every Qwen3/Qwen3.5 user serving from a nightly built between the revert and whatever future PyTorch 2.11 + #38123 combination re-enables the optimization.

Why it matters more on this model than you’d think

Qwen3.5-35B-A3B is a hybrid. Not every layer is GDN; many are vanilla attention over an MoE. But the GDN blocks are on the critical path of every decode step, and the input projection runs once per block per token. Removing the parallel-stream dispatch means dozens of additional serial waits per generated token. At 183 tok/s versus 198 tok/s, a single-stream decode step takes about 5.46 ms versus 5.05 ms, and that ~0.4 ms delta is entirely credible as stream-serialization overhead summed across a hybrid model’s GDN layers.
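The step-time arithmetic, spelled out. In single-stream decode each step emits one token, so step time is the reciprocal of throughput. Spreading the delta over the GDN layers gives a per-layer budget; the layer count used here is a hypothetical placeholder, so read the real number from the model’s config.

```python
def step_ms(tok_per_s: float) -> float:
    """Single-stream decode emits one token per step, so step time is 1 / throughput."""
    return 1000.0 / tok_per_s

def per_layer_us(delta_ms: float, n_layers: int) -> float:
    """Spread a per-step delta evenly across n_layers, in microseconds."""
    return delta_ms * 1000.0 / n_layers

slow_ms = step_ms(183.0)   # current nightly
fast_ms = step_ms(198.0)   # mar23 image
delta_ms = slow_ms - fast_ms
print(f"{slow_ms:.2f} ms vs {fast_ms:.2f} ms (delta {delta_ms:.2f} ms)")

# HYPOTHETICAL GDN layer count, for illustration only.
print(f"{per_layer_us(delta_ms, 30):.1f} us per GDN layer")
```

A budget on the order of ten microseconds per layer per step is the scale at which losing a two-GEMM overlap plausibly shows up.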

The other two suspect commits probably aren’t innocent — b779eb336’s “simplify warmup to single pass” could be choosing worse autotuner configs, and the GDN refactor might have introduced a small per-step Python cost — but they are orders of magnitude smaller contributors than the dual-stream revert.

What to do about it

Three options, cheapest first.

Pin the image. If you aren’t chasing a specific new feature, vllm/vllm-openai@sha256:923cbdaf… (cu130-nightly-mar23) gives the faster decode path today. The sibling container already does this, and that accounts for most of its lead.
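Pinning only works if the reference is a digest rather than a tag: a tag can be repointed at a new build, while a sha256 digest always names the same image contents. A hypothetical guard (not part of any Docker tooling) that a deploy script could run before starting the pinned container:

```python
HEX = set("0123456789abcdef")

def is_digest_pinned(image_ref: str) -> bool:
    """True if a Docker image reference is pinned by a full sha256 content digest.

    A tag such as 'vllm/vllm-openai:cu130-nightly' can move to a new build at
    any time; a reference ending in '@sha256:<64 hex chars>' cannot.
    """
    _, sep, digest = image_ref.partition("@sha256:")
    return bool(sep) and len(digest) == 64 and set(digest) <= HEX
```

Note that a truncated digest, as often shown in logs, fails the check on purpose; the full 64-character value is required.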

Overlay the revert-of-the-revert. vLLM’s model files are pure Python. A five-line file overlay that restores the torch.ops.vllm.gdn_in_proj branch in qwen3_5.py (and its qwen3_next.py twin) gives a recent nightly the old speed back, at the cost of the compile-time regression that motivated #38152 — perfectly fine for a long-running serving deployment, painful for CI.
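An overlay means bind-mounting an edited copy of the model file over the one installed in the image, so you first need the installed path. A minimal stdlib-only helper, hypothetical and meant to be run inside the serving container with a module name such as vllm.model_executor.models.qwen3_5 (and again for its qwen3_next twin). Note that find_spec imports parent packages, so this imports vllm itself.

```python
import importlib.util
from typing import Optional

def module_file(module_name: str) -> Optional[str]:
    """Return the source file backing `module_name`, or None if it can't be located."""
    try:
        # For dotted names this imports the parent packages first.
        spec = importlib.util.find_spec(module_name)
    except ImportError:
        return None
    return spec.origin if spec is not None else None

print(module_file("json.decoder"))
```

The printed path is the mount target for the patched file in your compose volumes.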

Wait. #38152’s TODO is real. Once vLLM’s plumbing (#38123) and PyTorch 2.11’s compile infrastructure land, dual-stream execution should come back without the compile-time penalty. That’s the clean fix and the one you want if you don’t need the speed back this week.

Takeaways

Two lessons worth writing down.

First, a nightly that feels “slower than it was last month” is not always a phantom. Docker image tags are moving targets, and two builds can legitimately diverge by 8% on the exact same GPU, driver, and command line. Pin something you trust and diff against it.

Second, performance regressions often look like tradeoffs, not bugs. #38152 is honest about what it is — a cold-start fix that gives back some hot-path throughput. The signal isn’t “somebody broke decode.” The signal is “somebody accepted a tax on decode to pay a bigger bill elsewhere.” Finding these requires reading the PR body, not just the diff.

The code is the same. The speed is different. The difference is a decision.