I recently ran five large language models through the legal gauntlet known as LegalBench. I wanted to see whether any of the new Qwen 3.5 models could displace my go-to local legal reasoning model, gpt-oss-120b. LegalBench spans 161 legal reasoning tasks and roughly 30,000 samples, covering everything from spotting contract clauses to dissecting M&A agreements. Across five models, that comes to about 150,000 local inference calls, which ran overnight on 4 GPUs.

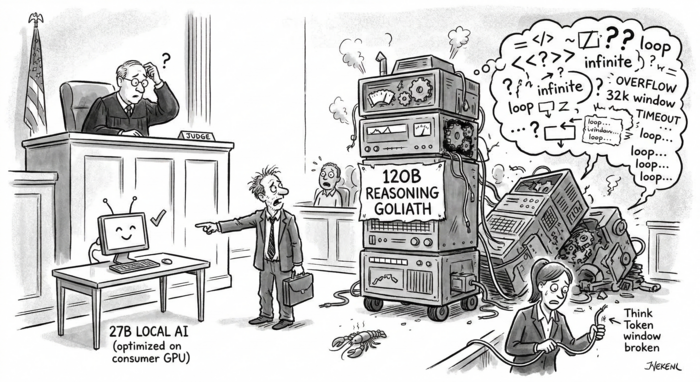

The prevailing wisdom in the AI space is that you need a massive, power-hungry model to handle complex legal reasoning. The results say otherwise. Spoiler alert: a 27B-parameter model, squeezed into 4-bit quantization and running locally on consumer hardware, outperformed a 120B heavyweight by about 6 points.

The Contenders

All models were scored with exact-match evaluation, reported as balanced accuracy or F1 depending on the task. Prompts were few-shot, with no fine-tuning or retrieval tricks.

| Model | Parameters | Hardware Setup | The “Catch” |
|---|---|---|---|
| gpt-oss-120b | 120B | RTX PRO 6000 Blackwell Max-Q | A massive reasoning model. |
| Qwen3.5-27B-AWQ | 27B | RTX 5090 | 4-bit quantization, thinking disabled. |
| Qwen3.5-35B-AWQ | 35B (3B active) | RTX 5090 | 4-bit quantization, thinking disabled. |
| Nemotron-30B-NVFP4 | 30B (3B active) | RTX 5090 | 4-bit quantization, thinking disabled. |
| Qwen3.5-9B-AWQ | 9B | RTX 5070 Ti | 4-bit quantization, thinking disabled. |

The Scoreboard

| Rank | Model | Overall Score | Individual Tasks Won |
|---|---|---|---|
| 1 | Qwen3.5-27B | 0.7936 | 74 |
| 2 | Qwen3.5-35B | 0.7612 | 27 |
| 3 | Qwen3.5-9B | 0.7583 | 35 |
| 4 | gpt-oss-120b | 0.7313 | 23 |
| 5 | Nemotron-30B | 0.5509 | 2 |

The 27B model won decisively. Despite being roughly 3 to 13 times larger than the other models, the 120B behemoth limped into fourth place.

Meanwhile, Nemotron-30B seems to be playing a different game entirely. It completely fell apart on multi-class tasks, enthusiastically answering “Yes” to questions that explicitly asked it to choose a letter. We appreciate the optimism, but not the accuracy.
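You can catch this failure mode before it silently drags down a score by measuring how often outputs fall outside the task's label set. The helper below is a hypothetical sketch and is not part of LegalBench. It assumes the allowed labels for each task are known in advance, and it ignores case and surrounding whitespace.

```python
def format_failure_rate(outputs: list[str], allowed_labels: set[str]) -> float:
    """Fraction of model outputs that are not exactly one of the task's allowed labels."""
    if not outputs:
        raise ValueError("no outputs to check")
    # Compare case-insensitively and ignore surrounding whitespace (an assumption).
    allowed = {label.strip().upper() for label in allowed_labels}
    failures = sum(1 for out in outputs if out.strip().upper() not in allowed)
    return failures / len(outputs)


# A multiple-choice task whose answers should be a single letter.
outputs = ["B", "Yes", "Yes", "d"]
print(format_failure_rate(outputs, {"A", "B", "C", "D"}))  # 0.5
```

A high rate on a letter-answer task points to a formatting problem rather than a reasoning problem.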

It is also worth highlighting the tiny 9B model. It won more tasks than the 35B model (35 vs. 27) and scored only 0.3 points below it overall. For a model that runs on a 16 GB RTX 5070 Ti, that is genuinely impressive.
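The point gaps quoted here come straight from the scoreboard, with overall scores rescaled to a 0 to 100 scale. Here is a small Python sketch of that arithmetic. The dictionary simply copies the table; the helper name is illustrative.

```python
# Overall score (0 to 1) and tasks won, copied from the scoreboard table.
SCOREBOARD = {
    "Qwen3.5-27B": (0.7936, 74),
    "Qwen3.5-35B": (0.7612, 27),
    "Qwen3.5-9B": (0.7583, 35),
    "gpt-oss-120b": (0.7313, 23),
    "Nemotron-30B": (0.5509, 2),
}


def gap_in_points(model_a: str, model_b: str) -> float:
    """Overall-score difference between two models, on a 0 to 100 point scale."""
    return round((SCOREBOARD[model_a][0] - SCOREBOARD[model_b][0]) * 100, 2)


print(gap_in_points("Qwen3.5-27B", "gpt-oss-120b"))  # 6.23
print(gap_in_points("Qwen3.5-35B", "Qwen3.5-9B"))  # 0.29
# The win counts sum to the 161 tasks, i.e. one winner per task.
print(sum(wins for _, wins in SCOREBOARD.values()))  # 161
```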

Where They Shine (and Stumble)

- Issue Spotting Is Qwen’s Love Language: The 27B model dominated here, scoring 0.886 compared with the 120B’s 0.649. These tasks reward pattern matching and strict adherence to the answer format, and the Qwen models followed the prompt’s instructions far more reliably.

- The Heavyweight Thinks Well: The gpt-oss-120b model finally earned its keep on rule-conclusion tasks (like diversity and personal jurisdiction), scoring a competitive 0.843 against the 27B’s 0.853. When genuine multi-step reasoning is required, the larger model nearly closes the gap.

- Nobody Knows the Rules: Rule-recall tasks, like predicting specific case citations or answering citizenship questions, proved difficult across the board. The best score was a dismal 0.541, from the 35B model. Recalling memorized legal knowledge is apparently just as hard for silicon brains as for human ones.

The “Think Mode” Trap

Qwen models feature an optional “think” mode that forces step-by-step reasoning before answering. In theory, this sounds perfect for legal work. In practice, it’s a trap when running benchmarks at scale.

The contrast here is stark. Because gpt-oss-120b is natively a reasoning model, its thinking stayed disciplined and never interfered with a final, properly formatted answer. When the optional reasoning mode was enabled on any of the Qwen 3.5 models, however, things frequently went off the rails. Their reasoning would routinely overrun a 32,000-token window or get stuck in infinite output loops. In other cases a single query ran past the 5-minute timeout and had to be hard-killed.
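At benchmark scale, the practical defense is a request that caps generation length, sets a per-query timeout, and switches thinking on or off explicitly. Below is a minimal Python sketch against vLLM's OpenAI-compatible chat completions endpoint, using only the standard library.

Several details are assumptions:

- the `localhost:8000` URL;
- the 1,024-token cap;
- greedy decoding via temperature 0;
- the `enable_thinking` switch. Qwen3 chat templates read this key through `chat_template_kwargs`. Whether Qwen3.5 uses the same key should be checked against its model card.

```python
import json
import urllib.request

VLLM_URL = "http://localhost:8000/v1/chat/completions"  # assumed local vLLM server


def build_request(model: str, prompt: str, *, thinking: bool = False, max_tokens: int = 1024) -> dict:
    """Chat-completions body; chat_template_kwargs is passed through to the model's chat template."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,  # hard cap so a runaway reasoning trace cannot loop indefinitely
        "temperature": 0.0,  # assumed greedy decoding for reproducible scoring
        "chat_template_kwargs": {"enable_thinking": thinking},  # Qwen3-style switch (assumed for Qwen3.5)
    }


def query(body: dict, timeout_s: float = 300.0) -> str:
    """POST the request and return the answer text.

    timeout_s is a socket-level timeout: urlopen raises if the server sends
    nothing for that long, which for a non-streaming request covers a query
    that never finishes.
    """
    req = urllib.request.Request(
        VLLM_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout_s) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]
```

A run would call `query(build_request("qwen3.5-27b-awq", prompt))` for each sample. The model name is hypothetical and must match whatever name the server was launched with.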

- 35B-Think: Turning this on improved scores by about 3 to 4 points, but bloated inference time from under a second to about 5 seconds per sample. At that rate, 30,000 samples take roughly 42 hours of sequential inference, turning a few hours of work into nearly two days.

- 9B-Think: Completely unusable. The model effectively lost its mind, rambling so much that it chewed through its entire token budget and routinely forgot to output the actual answer.
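The per-sample latencies above translate into wall-clock time as follows. The sketch assumes samples are processed strictly one at a time. In practice vLLM batches concurrent requests, so real throughput can be considerably higher.

```python
SAMPLES = 30_000  # approximate LegalBench sample count


def sequential_hours(seconds_per_sample: float, samples: int = SAMPLES) -> float:
    """Wall-clock hours to score every sample one at a time, with no batching."""
    return seconds_per_sample * samples / 3600


print(round(sequential_hours(1.0), 1))  # 8.3, an upper bound for a sub-second model
print(round(sequential_hours(5.0), 1))  # 41.7, roughly 1.7 days with thinking enabled
```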

The Final Verdict

- Qwen3.5-27B: The undeniable sweet spot for legal work. It requires about 16GB of VRAM with AWQ quantization, wins the most tasks by a landslide (74), and takes the top overall score.

- Qwen3.5-9B: The ultimate budget pick for high-volume inference or limited VRAM. It fits on a single 16 GB GPU and scores only about 3.5 points behind the champion.

- gpt-oss-120b: Keep this on hand only if your application specifically focuses on complex, multi-step legal determinations like statutory entailment or jurisdiction analysis.

- Nemotron-30B: Skip it. Just skip it.
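A quick back-of-the-envelope check on these VRAM figures multiplies parameter count by bits per weight. The Python sketch below assumes a nominal 4 bits per weight and counts the weights alone. AWQ checkpoints typically keep some tensors, such as embeddings, at higher precision, and vLLM also reserves memory for the KV cache. That is why the practical figure for the 27B model lands nearer 16GB than the raw weight estimate.

```python
def weight_gib(params_billions: float, bits_per_weight: float = 4.0) -> float:
    """Memory for the quantized weights alone, in GiB (excludes KV cache and activations)."""
    return params_billions * 1e9 * bits_per_weight / 8 / 2**30


for name, params in [("Qwen3.5-9B", 9), ("Qwen3.5-27B", 27), ("Qwen3.5-35B", 35)]:
    print(name, round(weight_gib(params), 1))
# Qwen3.5-9B 4.2
# Qwen3.5-27B 12.6
# Qwen3.5-35B 16.3
```

By this estimate the 9B model leaves plenty of headroom on a 16 GB RTX 5070 Ti, and both larger Qwen models fit within the RTX 5090's 32 GB.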

Ultimately, model size is a terrible proxy for legal reasoning ability. For contract analysis, clause detection, and issue spotting, smaller, well-quantized models running locally aren’t just cheaper; they’re better.

Reference Methodology: Evaluations were conducted using the collaborative benchmark LegalBench, consisting of 161 tasks and ~30K samples. All scores represent balanced accuracy or F1 via exact-match evaluation. All models were served locally via vLLM on an RTX PRO 6000 Blackwell, RTX 5090, or RTX 5070 Ti, depending on VRAM requirements.